Mixture-of-Experts in Remote Sensing: A Survey

Abstract

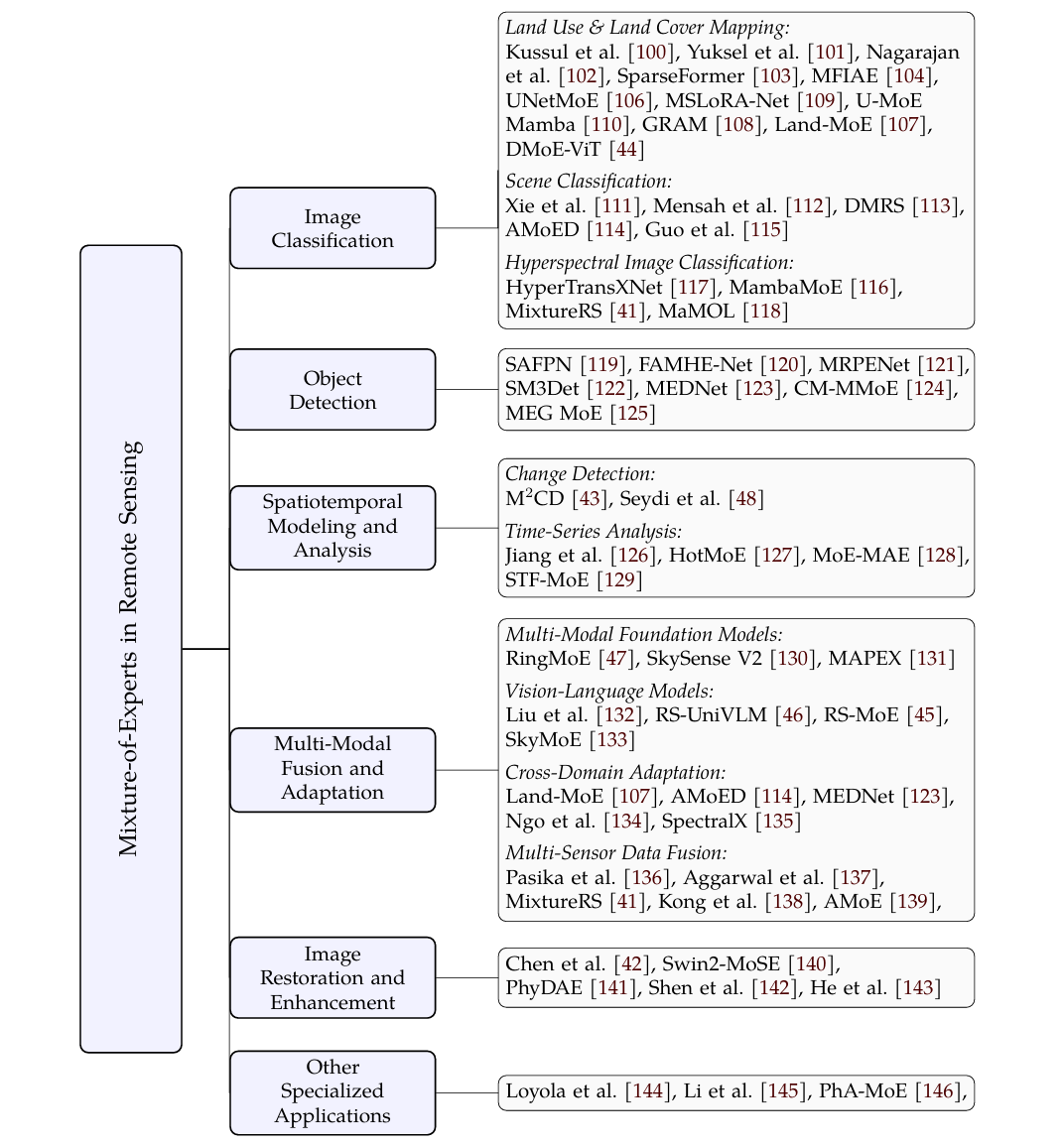

Remote sensing data analysis and interpretation present unique challenges due to the diversity of sensor modalities and the spatiotemporal dynamics of Earth observation data. The Mixture-of-Experts (MoE) model has emerged as a powerful paradigm that addresses these challenges by dynamically routing inputs to specialized experts designed for different aspects of a task. However, despite rapid progress, the community still lacks a comprehensive review of MoE for remote sensing. This survey provides the first systematic overview of MoE applications in remote sensing, covering fundamental principles, architectural designs, and key applications across a variety of remote sensing tasks. The survey also outlines future trends to inspire further research and innovation in applying MoE to remote sensing.


[CrossRef] [Google Scholar] - Dong, Z., Zhang, Z., Sun, Y., Jiang, H., Liu, T., & Gu, Y. (2026). PhyDAE: Physics-Guided Degradation-Adaptive Experts for All-in-One Remote Sensing Image Restoration. IEEE Transactions on Geoscience and Remote Sensing.

[CrossRef] [Google Scholar] - Shen, H., Ding, H., Zhang, Y., Cong, X., Zhao, Z.-Q., & Jiang, X. (2024). Spatial-Frequency Adaptive Remote Sensing Image Dehazing With Mixture of Experts. IEEE Transactions on Geoscience and Remote Sensing, 62, 1-14.

[CrossRef] [Google Scholar] - He, X., Yan, K., Li, R., Xie, C., Zhang, J., & Zhou, M. (2024, March). Frequency-adaptive pan-sharpening with mixture of experts. In Proceedings of the AAAI Conference on Artificial Intelligence (Vol. 38, No. 3, pp. 2121-2129).

[CrossRef] [Google Scholar] - Loyola R., D. G. (2006). Applications of neural network methods to the processing of earth observation satellite data. Neural Networks, 19(2), 168-177.

[CrossRef] [Google Scholar] - Li, Z., Chen, X., Li, J., & Zhang, J. (2022). Pertinent Multigate Mixture-of-Experts-Based Prestack Three-Parameter Seismic Inversion. IEEE Transactions on Geoscience and Remote Sensing, 60, 1-15.

[CrossRef] [Google Scholar] - Wang, W., Liu, B., Gao, S., Li, J., Zhou, Y., Zhang, S., & Ding, Z. (2025). PhA-MOE: Enhancing Hyperspectral Retrievals for Phytoplankton Absorption Using Mixture-of-Experts. Remote Sensing, 17(12), 2103.

[CrossRef] [Google Scholar] - Roberts, D. R., Bahn, V., Ciuti, S., Boyce, M. S., Elith, J., Guillera‐Arroita, G., ... & Dormann, C. F. (2017). Cross‐validation strategies for data with temporal, spatial, hierarchical, or phylogenetic structure. Ecography, 40(8), 913-929.

[CrossRef] [Google Scholar] - Valavi, R., Elith, J., Lahoz-Monfort, J. J., & Guillera-Arroita, G. (2018). blockCV: An r package for generating spatially or environmentally separated folds for k-fold cross-validation of species distribution models. Biorxiv, 357798.

[CrossRef] [Google Scholar] - Demšar, J. (2006). Statistical comparisons of classifiers over multiple data sets. Journal of Machine learning research, 7(Jan), 1-30.

[Google Scholar] - Dror, R., Baumer, G., Shlomov, S., & Reichart, R. (2018). The Hitchhiker's Guide to Testing Statistical Significance in Natural Language Processing. Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 1383-1392.

[CrossRef] [Google Scholar] - Selvaraju, R. R., Cogswell, M., Das, A., Vedantam, R., Parikh, D., & Batra, D. (2017). Grad-CAM: Visual Explanations from Deep Networks via Gradient-Based Localization. 2017 IEEE International Conference on Computer Vision (ICCV), 618-626.

[CrossRef] [Google Scholar] - Sundararajan, M., Taly, A., & Yan, Q. (2017). Axiomatic attribution for deep networks. Proceedings of the 34th International Conference on Machine Learning - Volume 70, 3319-3328.

[Google Scholar] - Ribeiro, M. T., Singh, S., & Guestrin, C. (2016). Why Should I Trust You?: Explaining the Predictions of Any Classifier. Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 1135-1144.

[CrossRef] [Google Scholar] - Guo, C., Pleiss, G., Sun, Y., & Weinberger, K. Q. (2017). On calibration of modern neural networks. Proceedings of the 34th International Conference on Machine Learning - Volume 70, 1321-1330.

[Google Scholar] - Ovadia, Y., Fertig, E., Ren, J., Nado, Z., Sculley, D., Nowozin, S., ... & Snoek, J. (2019). Can you trust your model's uncertainty? evaluating predictive uncertainty under dataset shift. Advances in neural information processing systems, 32.

[Google Scholar] - Zhao, Q., Jiang, C., Hu, W., Zhang, F., & Liu, J. (2023). MDCS: More Diverse Experts with Consistency Self-distillation for Long-tailed Recognition. 2023 IEEE/CVF International Conference on Computer Vision (ICCV), 11563-11574.

[CrossRef] [Google Scholar] - Li, J., Li, D., Savarese, S., & Hoi, S. (2023, July). Blip-2: Bootstrapping language-image pre-training with frozen image encoders and large language models. In International conference on machine learning (pp. 19730-19742). PMLR.

[Google Scholar] - Hochreiter, S., & Schmidhuber, J. (1997). Long short-term memory. Neural Computation, 9(8), 1735-1780.

[CrossRef] [Google Scholar] - Gu, A., & Dao, T. (2024, May). Mamba: Linear-time sequence modeling with selective state spaces. In First conference on language modeling.

[Google Scholar] - Dao, T., & Gu, A. (2024, July). Transformers are SSMs: generalized models and efficient algorithms through structured state space duality. In Proceedings of the 41st International Conference on Machine Learning (pp. 10041-10071).

[Google Scholar] - Mehta, S., & Rastegari, M. (2022). Separable self-attention for mobile vision transformers. arXiv preprint arXiv:2206.02680.

[Google Scholar] - Cheng, G., Han, J., & Lu, X. (2017). Remote Sensing Image Scene Classification: Benchmark and State of the Art. Proceedings of the IEEE, 105(10), 1865-1883.

[CrossRef] [Google Scholar]

Cite This Article

TY  - JOUR
AU  - Cui, Yongchuan
AU  - Liu, Peng
AU  - Chen, Lajiao
PY  - 2026
DA  - 2026/03/23
TI  - Mixture-of-Experts in Remote Sensing: A Survey
JO  - Journal of Geoscience and Remote Sensing
T2  - Journal of Geoscience and Remote Sensing
JF  - Journal of Geoscience and Remote Sensing
VL  - 1
IS  - 1
SP  - 4
EP  - 38
DO  - 10.62762/JGRS.2025.140654
UR  - https://www.icck.org/article/abs/JGRS.2025.140654
KW  - Mixture-of-Experts
KW  - remote sensing
KW  - image classification
KW  - vision-language models
KW  - object detection
KW  - change detection
KW  - multi-modal fusion
KW  - super-resolution
AB  - Remote sensing data analysis and interpretation present unique challenges due to the diversity in sensor modalities and spatiotemporal dynamics of Earth observation data. Mixture-of-Experts (MoE) model has emerged as a powerful paradigm that addresses these challenges by dynamically routing inputs to specialized experts designed for different aspects of a task. However, despite rapid progress, the community still lacks a comprehensive review of MoE for remote sensing. This survey provides the first systematic overview of MoE applications in remote sensing, covering fundamental principles, architectural designs, and key applications across a variety of remote sensing tasks. The survey also outlines future trends to inspire further research and innovation in applying MoE to remote sensing.
SN  - pending
PB  - Institute of Central Computation and Knowledge
LA  - English
ER  -

@article{Cui2026MixtureofE,
  author = {Yongchuan Cui and Peng Liu and Lajiao Chen},
  title = {Mixture-of-Experts in Remote Sensing: A Survey},
  journal = {Journal of Geoscience and Remote Sensing},
  year = {2026},
  volume = {1},
  number = {1},
  pages = {4-38},
  doi = {10.62762/JGRS.2025.140654},
  url = {https://www.icck.org/article/abs/JGRS.2025.140654},
  abstract = {Remote sensing data analysis and interpretation present unique challenges due to the diversity in sensor modalities and spatiotemporal dynamics of Earth observation data. Mixture-of-Experts (MoE) model has emerged as a powerful paradigm that addresses these challenges by dynamically routing inputs to specialized experts designed for different aspects of a task. However, despite rapid progress, the community still lacks a comprehensive review of MoE for remote sensing. This survey provides the first systematic overview of MoE applications in remote sensing, covering fundamental principles, architectural designs, and key applications across a variety of remote sensing tasks. The survey also outlines future trends to inspire further research and innovation in applying MoE to remote sensing.},
  keywords = {Mixture-of-Experts, remote sensing, image classification, vision-language models, object detection, change detection, multi-modal fusion, super-resolution},
  issn = {pending},
  publisher = {Institute of Central Computation and Knowledge}
}

Publisher's Note

ICCK stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and Permissions

Copyright © 2026 by the Author(s). Published by Institute of Central Computation and Knowledge. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/), which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made.