ICCK Transactions on Intelligent Systematics

ISSN: 3068-5079 (Online) | ISSN: 3069-003X (Print)

Email: [email protected]

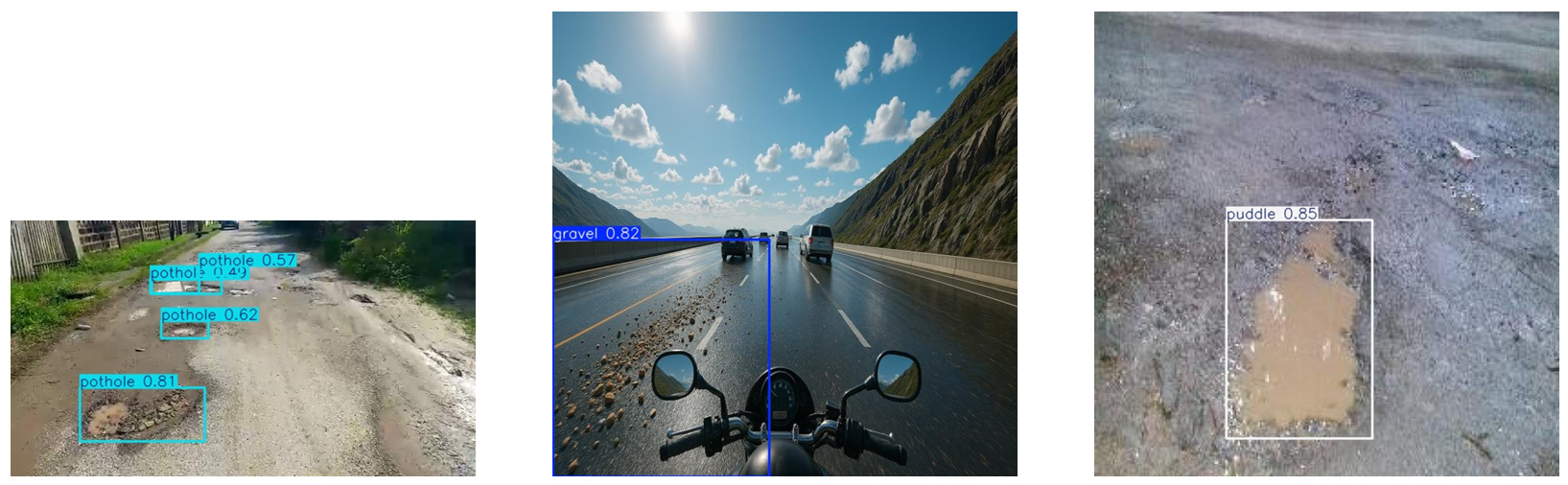

TY  - JOUR
AU  - Silva, Tiago
AU  - Silva, João
AU  - Sousa, António
AU  - Filipe, Vítor
PY  - 2026
DA  - 2026/03/03
TI  - Real-Time Detection of Road Anomalies for Integration in Rider Assistance Systems
JO  - ICCK Transactions on Intelligent Systematics
T2  - ICCK Transactions on Intelligent Systematics
JF  - ICCK Transactions on Intelligent Systematics
VL  - 3
IS  - 1
SP  - 32
EP  - 54
DO  - 10.62762/TIS.2025.418469
UR  - https://www.icck.org/article/abs/TIS.2025.418469
KW  - road monitoring
KW  - computer vision
KW  - object detection
KW  - edge devices
KW  - advanced rider assistance systems
AB  - Road safety has become an increasingly important concern and the integration of Advanced Rider Assistance Systems and Advanced Driver Assistance Systems plays a crucial role in preventing accidents. This work proposes a computer vision pipeline to automatically detect hazardous road anomalies (loose gravel, potholes, and puddles) from a motorcycle-mounted camera, targeting real-time operation on embedded edge devices. A hybrid dataset of 28764 annotated images was created by combining real-world photos, Blender-rendered synthetic scenes, and AI-generated images to improve diversity and coverage. Multiple state-of-the-art object detectors were trained and benchmarked, including the YOLOv5/7/11/12 families and the transformer-based RT-DETR architecture. While the RT-DETR model achieved the highest precision overall, its computational complexity and heavy resource requirements limited its suitability for real-time deployment on low-cost embedded platforms. Conversely, the YOLOv11n model demonstrated the best accuracy–efficiency trade-off, reaching mAP@0.5 = 0.872 at 320$\times$320 with 0.045 s/frame on a Jetson Nano, while lighter variants remained viable on Raspberry Pi boards. Across classes, gravel was the most reliably detected, and operating points around a confidence threshold of $\tau \approx 0.31$ yielded balanced F1 scores up to 0.82. Although results show that automatic road-condition monitoring on affordable hardware is feasible, the prototype has not yet undergone on-road field trials. It does not include an integrated rider alert module or energy-use assessment. These gaps define the immediate roadmap for deployment.
SN  - 3068-5079
PB  - Institute of Central Computation and Knowledge
LA  - English
ER  -

@article{Silva2026RealTime,
  author = {Tiago Silva and João Silva and António Sousa and Vítor Filipe},
  title = {Real-Time Detection of Road Anomalies for Integration in Rider Assistance Systems},
  journal = {ICCK Transactions on Intelligent Systematics},
  year = {2026},
  volume = {3},
  number = {1},
  pages = {32--54},
  doi = {10.62762/TIS.2025.418469},
  url = {https://www.icck.org/article/abs/TIS.2025.418469},
  abstract = {Road safety has become an increasingly important concern and the integration of Advanced Rider Assistance Systems and Advanced Driver Assistance Systems plays a crucial role in preventing accidents. This work proposes a computer vision pipeline to automatically detect hazardous road anomalies (loose gravel, potholes, and puddles) from a motorcycle-mounted camera, targeting real-time operation on embedded edge devices. A hybrid dataset of 28764 annotated images was created by combining real-world photos, Blender-rendered synthetic scenes, and AI-generated images to improve diversity and coverage. Multiple state-of-the-art object detectors were trained and benchmarked, including the YOLOv5/7/11/12 families and the transformer-based RT-DETR architecture. While the RT-DETR model achieved the highest precision overall, its computational complexity and heavy resource requirements limited its suitability for real-time deployment on low-cost embedded platforms. Conversely, the YOLOv11n model demonstrated the best accuracy–efficiency trade-off, reaching mAP@0.5 = 0.872 at 320$\times$320 with 0.045 s/frame on a Jetson Nano, while lighter variants remained viable on Raspberry Pi boards. Across classes, gravel was the most reliably detected, and operating points around a confidence threshold of $\tau \approx 0.31$ yielded balanced F1 scores up to 0.82. Although results show that automatic road-condition monitoring on affordable hardware is feasible, the prototype has not yet undergone on-road field trials. It does not include an integrated rider alert module or energy-use assessment. These gaps define the immediate roadmap for deployment.},
  keywords = {road monitoring, computer vision, object detection, edge devices, advanced rider assistance systems},
  issn = {3068-5079},
  publisher = {Institute of Central Computation and Knowledge}
}


Portico

All published articles are preserved here permanently:

https://www.portico.org/publishers/icck/