Frontiers in Biomedical Signal Processing

ISSN: request pending (Online) | ISSN: request pending (Print)

Email: [email protected]

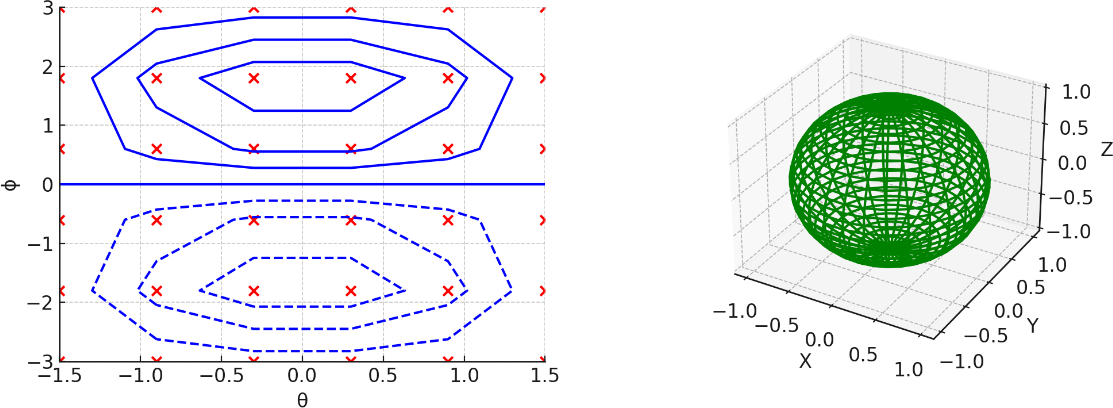

TY  - JOUR
AU  - Sadegh-Zadeh, Seyed-Ali
AU  - Sadeghzadeh, Nasrin
AU  - Ghidary, Saeed Shiry
AU  - Movahedi, Sobhan
AU  - Kavianpour, Kaveh
AU  - Barati, Mohammad Amin
AU  - Mamalo, Alireza Soleimani
PY  - 2026
DA  - 2026/03/02
TI  - Adaptive Manifold Concept with Regularized Autoencoders (AMRAE) for Effective Dimensionality Reduction
JO  - Frontiers in Biomedical Signal Processing
T2  - Frontiers in Biomedical Signal Processing
JF  - Frontiers in Biomedical Signal Processing
VL  - 1
IS  - 2
SP  - 79
EP  - 104
DO  - 10.62762/FBSP.2025.800185
UR  - https://www.icck.org/article/abs/FBSP.2025.800185
KW  - dimensionality reduction
KW  - manifold concept regularized autoencoders
KW  - machine learning
KW  - high-dimensional data
AB  - The Adaptive Manifold Concept with Regularized Autoencoders (AMRAE) algorithm is introduced as a novel dimensionality reduction technique that integrates manifold learning with autoencoders to capture the intrinsic geometry of high-dimensional data effectively. The study evaluates the impact of various adjustments and enhancements within the "Manifold Adjustment Box," including different regularization techniques, activation functions, and architectural choices, across diverse datasets. Key findings demonstrate that configurations such as Leaky ReLU Activation and Batch Norm Layer consistently improve accuracy, and the results highlight the flexibility and robustness of AMRAE. The results underscore the significance of AMRAE in addressing the limitations of traditional dimensionality reduction methods, with potential applications in various fields requiring high-dimensional data analysis. This paper provides a comprehensive summary of the objectives, methodology, results, and conclusions, showcasing AMRAE's efficacy in improving data representation and machine learning performance.
SN  - request pending
PB  - Institute of Central Computation and Knowledge
LA  - English
ER  - 

@article{SadeghZadeh2026Adaptive,
  author = {Seyed-Ali Sadegh-Zadeh and Nasrin Sadeghzadeh and Saeed Shiry Ghidary and Sobhan Movahedi and Kaveh Kavianpour and Mohammad Amin Barati and Alireza Soleimani Mamalo},
  title = {Adaptive Manifold Concept with Regularized Autoencoders (AMRAE) for Effective Dimensionality Reduction},
  journal = {Frontiers in Biomedical Signal Processing},
  year = {2026},
  volume = {1},
  number = {2},
  pages = {79--104},
  doi = {10.62762/FBSP.2025.800185},
  url = {https://www.icck.org/article/abs/FBSP.2025.800185},
  abstract = {The Adaptive Manifold Concept with Regularized Autoencoders (AMRAE) algorithm is introduced as a novel dimensionality reduction technique that integrates manifold learning with autoencoders to capture the intrinsic geometry of high-dimensional data effectively. The study evaluates the impact of various adjustments and enhancements within the "Manifold Adjustment Box," including different regularization techniques, activation functions, and architectural choices, across diverse datasets. Key findings demonstrate that configurations such as Leaky ReLU Activation and Batch Norm Layer consistently improve accuracy, and the results highlight the flexibility and robustness of AMRAE. The results underscore the significance of AMRAE in addressing the limitations of traditional dimensionality reduction methods, with potential applications in various fields requiring high-dimensional data analysis. This paper provides a comprehensive summary of the objectives, methodology, results, and conclusions, showcasing AMRAE's efficacy in improving data representation and machine learning performance.},
  keywords = {dimensionality reduction, manifold concept regularized autoencoders, machine learning, high-dimensional data},
  issn = {request pending},
  publisher = {Institute of Central Computation and Knowledge}
}

Copyright © 2026 by the Author(s). Published by Institute of Central Computation and Knowledge. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/), which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made.

Portico

All published articles are preserved here permanently:

https://www.portico.org/publishers/icck/