ICCK Transactions on Intelligent Systematics

ISSN: 3068-5079 (Online) | ISSN: 3069-003X (Print)

Email: [email protected]

Submit Manuscript

Edit a Special Issue

Submit Manuscript

Edit a Special Issue

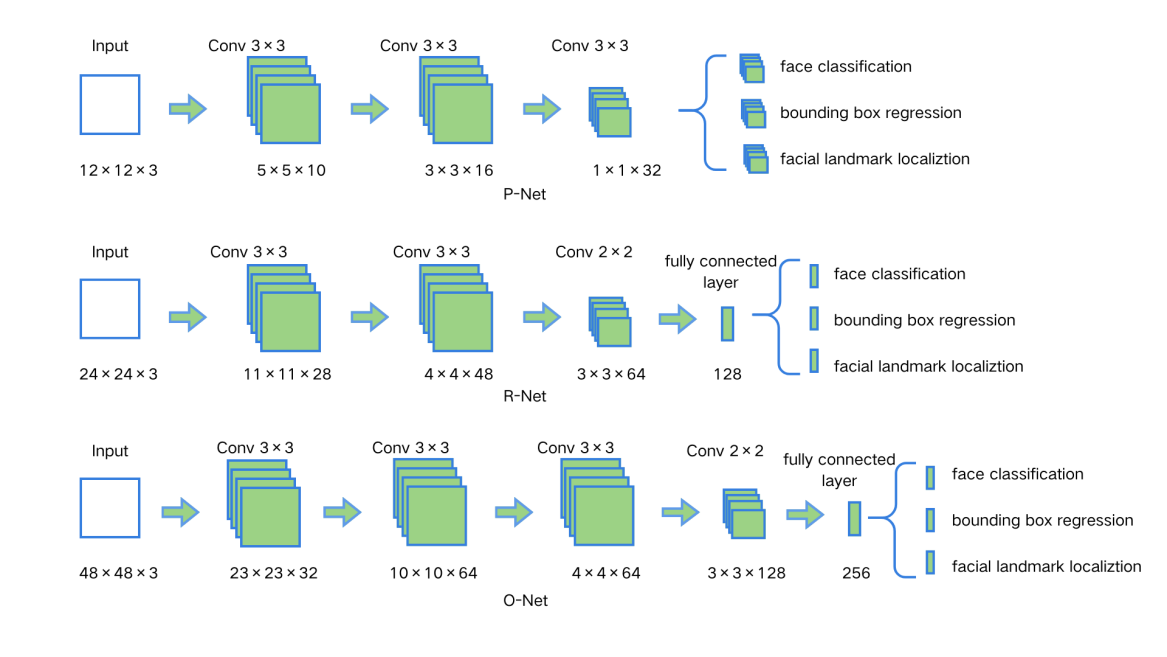

TY  - JOUR
AU  - Huang, Ling
AU  - Li, Shifeng
AU  - Man, Yaxin
AU  - Wang, Xiaoyan
AU  - Tang, Xiu
AU  - Ji, Rendong
PY  - 2026
DA  - 2026/03/05
TI  - Fatigue Driving Detection via Multi-Head Transformer with Adaptive Weighted Loss
JO  - ICCK Transactions on Intelligent Systematics
T2  - ICCK Transactions on Intelligent Systematics
JF  - ICCK Transactions on Intelligent Systematics
VL  - 3
IS  - 1
SP  - 55
EP  - 69
DO  - 10.62762/TIS.2025.633754
UR  - https://www.icck.org/article/abs/TIS.2025.633754
KW  - fatigue driving detection
KW  - facial keypoints
KW  - transformer
KW  - adaptive weighted loss
AB  - Fatigue driving is widely recognized as one of the major factors contributing to traffic accidents, posing not only a serious threat to road safety but also potential risks to drivers’ health and public security. With the rapid development of modern transportation, how to efficiently and accurately detect and warn against driver fatigue has become a critical issue in the field of intelligent transportation. To effectively address this issue, this paper proposes a novel fatigue driving detection method based on a Multi-Head Transformer with Adaptive Weighted Loss. In the proposed framework, the YOLOv8 model is first employed to efficiently and accurately locate key facial regions of the driver from real-time video streams, ensuring both high-speed processing and robustness. Subsequently, a Multi-Head Transformer model is introduced to capture the temporal dependencies and feature correlations among facial landmarks, enabling a more comprehensive characterization of fatigue-related behaviors such as eye closure, blinking, and yawning. In addition, an Adaptive Weighted Loss function is designed to dynamically balance the contributions of multiple fatigue features during training, effectively alleviating the class imbalance problem and enhancing the model’s generalization capability. Experimental results demonstrate that the proposed method maintains stable and superior detection accuracy even under complex long-duration driving conditions. Compared with traditional approaches, the system improves fatigue detection accuracy by 7.2%, achieving 95.5%, while also satisfying real-time requirements. In summary, this study presents an intelligent, adaptive, and efficient fatigue driving detection framework that provides a reliable theoretical foundation for intelligent warning systems and holds significant value for enhancing road traffic safety.
SN  - 3068-5079
PB  - Institute of Central Computation and Knowledge
LA  - English
ER  -

@article{Huang2026Fatigue,
  author = {Ling Huang and Shifeng Li and Yaxin Man and Xiaoyan Wang and Xiu Tang and Rendong Ji},
  title = {Fatigue Driving Detection via Multi-Head Transformer with Adaptive Weighted Loss},
  journal = {ICCK Transactions on Intelligent Systematics},
  year = {2026},
  volume = {3},
  number = {1},
  pages = {55--69},
  doi = {10.62762/TIS.2025.633754},
  url = {https://www.icck.org/article/abs/TIS.2025.633754},
  abstract = {Fatigue driving is widely recognized as one of the major factors contributing to traffic accidents, posing not only a serious threat to road safety but also potential risks to drivers’ health and public security. With the rapid development of modern transportation, how to efficiently and accurately detect and warn against driver fatigue has become a critical issue in the field of intelligent transportation. To effectively address this issue, this paper proposes a novel fatigue driving detection method based on a Multi-Head Transformer with Adaptive Weighted Loss. In the proposed framework, the YOLOv8 model is first employed to efficiently and accurately locate key facial regions of the driver from real-time video streams, ensuring both high-speed processing and robustness. Subsequently, a Multi-Head Transformer model is introduced to capture the temporal dependencies and feature correlations among facial landmarks, enabling a more comprehensive characterization of fatigue-related behaviors such as eye closure, blinking, and yawning. In addition, an Adaptive Weighted Loss function is designed to dynamically balance the contributions of multiple fatigue features during training, effectively alleviating the class imbalance problem and enhancing the model’s generalization capability. Experimental results demonstrate that the proposed method maintains stable and superior detection accuracy even under complex long-duration driving conditions. Compared with traditional approaches, the system improves fatigue detection accuracy by 7.2\%, achieving 95.5\%, while also satisfying real-time requirements. In summary, this study presents an intelligent, adaptive, and efficient fatigue driving detection framework that provides a reliable theoretical foundation for intelligent warning systems and holds significant value for enhancing road traffic safety.},
  keywords = {fatigue driving detection, facial keypoints, transformer, adaptive weighted loss},
  issn = {3068-5079},
  publisher = {Institute of Central Computation and Knowledge}
}

Portico

All published articles are preserved here permanently:

https://www.portico.org/publishers/icck/