ICCK Transactions on Emerging Topics in Artificial Intelligence

ISSN: 3068-6652 (Online)

Email: [email protected]

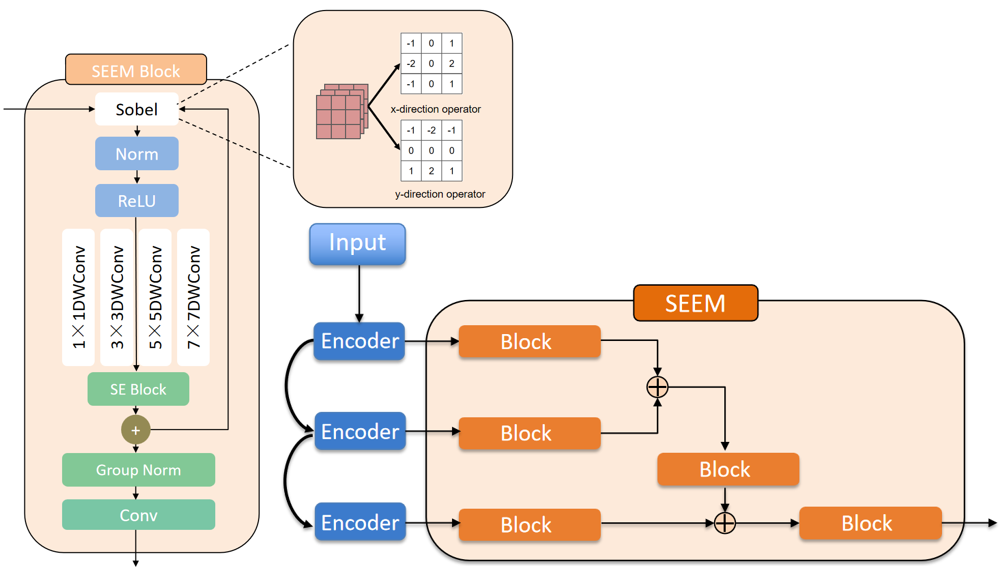

TY  - JOUR
AU  - Su, Tingli
AU  - Wan, Rui
AU  - Wang, Senmao
AU  - Bai, Yuting
PY  - 2026
DA  - 2026/03/06
TI  - SEFF-Net: A Hybrid Feature Fusion Network for Accurate Segmentation of Breast Ultrasound Images
JO  - ICCK Transactions on Emerging Topics in Artificial Intelligence
T2  - ICCK Transactions on Emerging Topics in Artificial Intelligence
JF  - ICCK Transactions on Emerging Topics in Artificial Intelligence
VL  - 3
IS  - 2
SP  - 128
EP  - 141
DO  - 10.62762/TETAI.2026.494190
UR  - https://www.icck.org/article/abs/TETAI.2026.494190
KW  - breast ultrasound segmentation
KW  - edge enhancement
KW  - feature fusion
KW  - hybrid loss strategy
KW  - image processing
AB  - Breast ultrasound imaging plays a crucial role in early breast cancer screening and diagnosis due to its noninvasive nature and cost-effectiveness. However, accurate lesion segmentation remains challenging because of severe speckle noise, low contrast, and blurred tumor boundaries. To address these issues, this paper proposes SEFF-Net, a novel edge-aware feature fusion network with a U-shaped encoder–decoder architecture that captures multi-level semantic representations for the breast ultrasound image segmentation task. To enhance boundary perception, a Self-learning Edge Enhancement Module is embedded in the shallow encoding stages, while a Spatial Feature Fusion Module is introduced to effectively integrate multi-scale features by leveraging spatial context, thereby achieving a better balance between low-level spatial details and high-level semantic information. To further alleviate the class imbalance between foreground and background regions and improve boundary learning, a novel joint loss function is designed by combining region-based consistency constraints with boundary-sensitive supervision. This optimization strategy reinforces contour awareness while maintaining overall segmentation accuracy. Experimental results demonstrate that SEFF-Net consistently outperforms state-of-the-art segmentation methods across multiple evaluation metrics, including Dice coefficient, IoU, and boundary-related measures. Overall, SEFF-Net provides an effective and reliable solution for accurate breast ultrasound image segmentation, showing promising potential for clinical computer-aided diagnosis systems.
SN  - 3068-6652
PB  - Institute of Central Computation and Knowledge
LA  - English
ER  -

@article{Su2026SEFFNet,

author = {Tingli Su and Rui Wan and Senmao Wang and Yuting Bai},

title = {SEFF-Net: A Hybrid Feature Fusion Network for Accurate Segmentation of Breast Ultrasound Images},

journal = {ICCK Transactions on Emerging Topics in Artificial Intelligence},

year = {2026},

volume = {3},

number = {2},

pages = {128--141},

doi = {10.62762/TETAI.2026.494190},

url = {https://www.icck.org/article/abs/TETAI.2026.494190},

abstract = {Breast ultrasound imaging plays a crucial role in early breast cancer screening and diagnosis due to its noninvasive nature and cost-effectiveness. However, accurate lesion segmentation remains challenging because of severe speckle noise, low contrast, and blurred tumor boundaries. To address these issues, this paper proposes SEFF-Net, a novel edge-aware feature fusion network with a U-shaped encoder–decoder architecture that captures multi-level semantic representations for the breast ultrasound image segmentation task. To enhance boundary perception, a Self-learning Edge Enhancement Module is embedded in the shallow encoding stages, while a Spatial Feature Fusion Module is introduced to effectively integrate multi-scale features by leveraging spatial context, thereby achieving a better balance between low-level spatial details and high-level semantic information. To further alleviate the class imbalance between foreground and background regions and improve boundary learning, a novel joint loss function is designed by combining region-based consistency constraints with boundary-sensitive supervision. This optimization strategy reinforces contour awareness while maintaining overall segmentation accuracy. Experimental results demonstrate that SEFF-Net consistently outperforms state-of-the-art segmentation methods across multiple evaluation metrics, including Dice coefficient, IoU, and boundary-related measures. Overall, SEFF-Net provides an effective and reliable solution for accurate breast ultrasound image segmentation, showing promising potential for clinical computer-aided diagnosis systems.},

keywords = {breast ultrasound segmentation, edge enhancement, feature fusion, hybrid loss strategy, image processing},

issn = {3068-6652},

publisher = {Institute of Central Computation and Knowledge}

}

Copyright © 2026 by the Author(s). Published by Institute of Central Computation and Knowledge. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/), which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made.

Portico

All published articles are preserved here permanently:

https://www.portico.org/publishers/icck/